In February 2025 Aleksey Rudenko could not get to work.

It was not a dramatic scene with police sirens, broken-down doors or men in masks. Everything looked far more prosaic — and for that very reason more frightening.

The turnstile at the entrance to the logistics terminal blinked red for an instant.

"Access error," said the guard, without even raising his eyes.

Aleksey tried to swipe his pass once more. Then again.

Industrial cold-light lamps hummed above the gate; it smelled of wet concrete and engine oil. Somewhere deep in the warehouse, electric carts were beeping.

Twenty minutes later he was told that his profile had been temporarily restricted by the company's internal security system. Three days later, the bank unexpectedly requested an additional check on his transactions.

A week later, the insurance company raised the cost of his policy. A month after that, he learned that another firm's automated HR system had rejected his candidacy before any interview took place. The reason was nowhere stated outright.

It later turned out that the chain had begun with an analytical system that had detected an "anomalous behavioural model": night-time trips through the outskirts of the city, membership in several closed online cryptocurrency communities, and regular use of anonymisation services.

Aleksey had committed no crime. But the algorithm decided that he resembled a person who potentially could.

The Point Where the Algorithm Stops Being an Observer

This is precisely the principal shift in the nature of personal security today. Once, danger came after the act. Now, it comes after the forecast.

The Suspicion Machine

Humanity has already lived through epochs of total control, and each time it seemed to people that the limit had finally been reached. In the nineteenth century, the secret police of European empires compiled dossiers on revolutionaries, students, journalists and philosophers. Whole departments in Austria-Hungary, the Russian Empire and Prussia intercepted letters, watched taverns and drew up psychological profiles of unreliable persons. In the twentieth century, states learned to build files on millions of citizens. The Stasi archives, Soviet Cold War surveillance bases, the operative files of the security services — all this was perceived as the summit of administrative control over the human being.

But the older systems had a fundamental flaw: they always required a human being.

A human watched. A human sorted information. A human grew tired. A human made mistakes. Even the harshest system of the past ran up against the limits of human attention. An officer might miss a detail; an analyst might misread the data; a field operative might lose sight of a suspect.

The modern algorithm is built differently. It knows no fatigue, requires no sleep and does not lose concentration. It analyses millions of digital traces simultaneously, links events that the human brain would never have correlated by hand, and does so continuously — twenty-four hours a day.

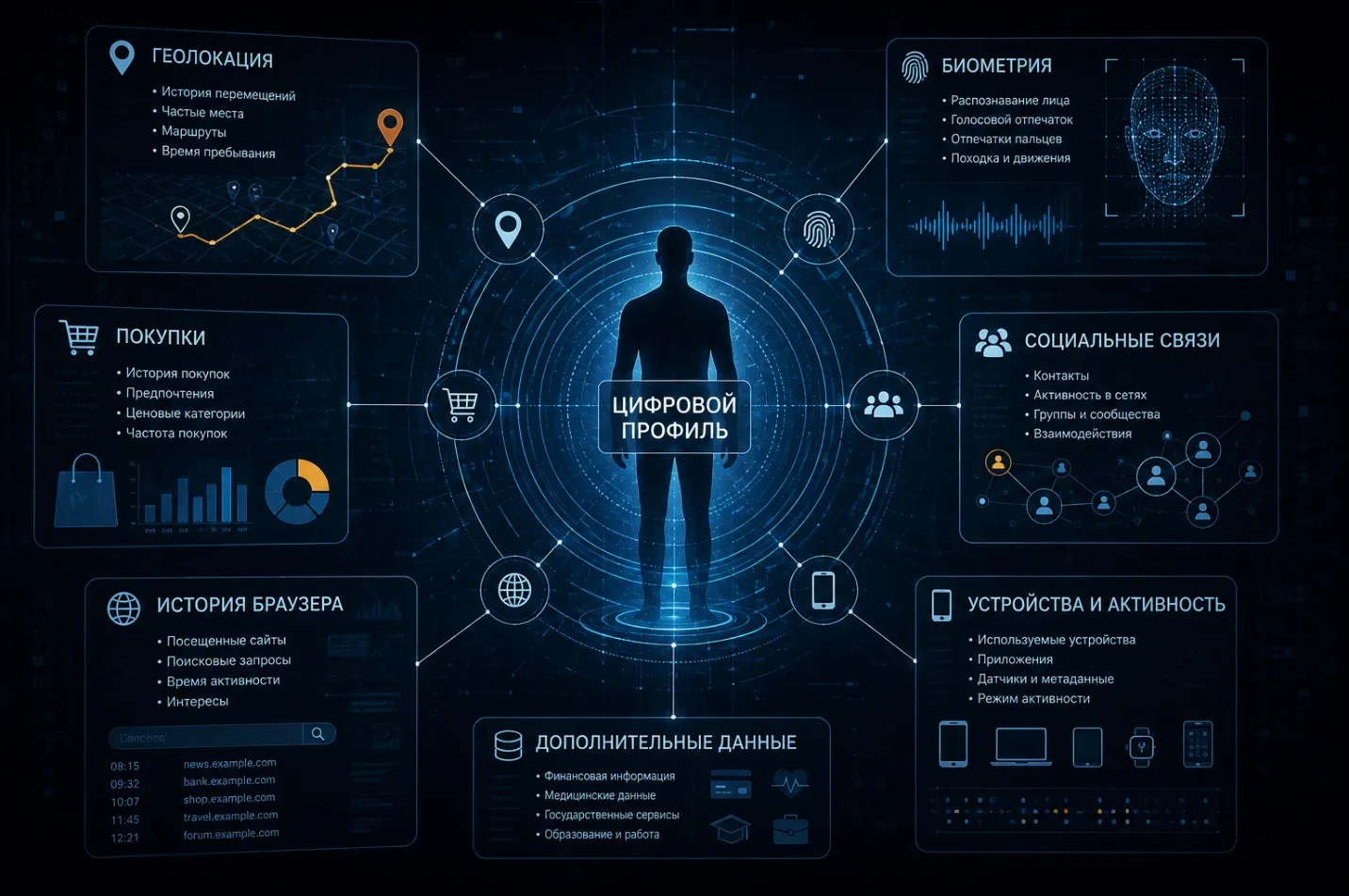

Today the digital profile of an individual is assembled from thousands of small things. Smartphone geolocation reveals habitual routes. Purchase history speaks of financial standing and lifestyle. Typing patterns can be used to infer emotional state. Pauses between messages form a behavioural rhythm. Facial biometrics and voice timbre become the digital fingerprint of the person. Even the angle at which a smartphone is held while walking can be used as an identifier.

And the danger lies not so much in the data themselves as in the connections between them.

An ordinary person assumes that his life breaks down into separate fragments: work apart, purchases apart, social networks apart, navigation apart. The algorithm sees everything as a single picture. For it, there is no difference between a banking transaction, a late call, a taxi route and a comment online. Everything turns into elements of a single behavioural pattern.

In 2016, researchers at Stanford University showed that an algorithm is capable of identifying a person's psychological traits from digital behaviour more accurately than the person's own colleagues.

What the Camera Computes as You Pass

A few years later, specialists at Carnegie Mellon University presented models that forecast the risk of financial fraud from behavioural patterns long before the incident itself. This marks a fundamental change. Modern security systems work less and less with the past. They are beginning to work with the probabilities of the future.

It is here that a new type of conflict emerges. Not a person against a criminal. A person against the algorithm of interpretation.

For the system of the future, suspicion ceases to be a legal category and becomes a statistical probability. And this is especially dangerous because the person often does not even know on what criteria he is being assessed.

Once, in medieval Europe, a person at least saw his accuser. In the twenty-first century, the accusation is gradually becoming invisible. It is delivered not by a judge, not by an official, not even by a security officer. It is delivered by a mathematical model.

Social Rating Without a Declaration of War

When digital control is discussed today, most people automatically recall the Chinese social-rating system. Surveillance cameras, facial recognition, trust scores — all this has long become a familiar example in journalism and public debate. It is convenient. A distant country, an alien political model, an exotic system of state control. But the real problem lies elsewhere. The mechanism of social rating already exists almost everywhere — only it is distributed among banks, insurance companies, logistics platforms, HR services, government agencies and digital corporations. It does not look like a single system, yet it operates as one.

A person with a poor credit history receives restrictions. A person with suspicious digital activity is subjected to additional checks. A person with non-standard behaviour attracts the interest of automated analytical systems. Officially, no one calls this a social rating. But the substance is the same.

Modern society is gradually moving towards a model in which the algorithm begins to determine the level of trust in a person before that person has had a chance to explain anything.

In London, an experiment was conducted several years ago on the behavioural forecasting of passenger flows in the Underground. The system analysed routes, delays, traffic density and anomalous deviations. Initially, the talk was purely about logistics and infrastructure security. But it very quickly became clear that such models could be used not only to manage flows of people, but also to analyse suspicious behaviour.

In the United States, predictive policing is already in use by a number of police departments. Algorithms compute high-risk districts and potentially dangerous patterns of activity. Supporters of such systems claim that the technology helps reduce crime and distribute police resources more efficiently. And to some extent this is in fact the case. But the problem lies elsewhere. The algorithm almost always inherits the errors of the environment on which it was trained.

The Border Between Predictive and Coercive

If for decades the system received data from districts with high crime rates, it begins to see threats there more often. If a particular social group ended up in police reports more frequently, the system will pay it heightened attention. The machine knows no justice. It knows correlation.
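The mechanism can be made concrete with a toy calculation. The sketch below is purely illustrative and models no real police system: the district names, the starting counts, the patrol budget and the identical underlying incident rate are all assumptions invented for the example. Patrol hours are shared out in proportion to past records, and incidents are recorded only where patrols are present.

```python
# Toy model, not a real predictive-policing system. All numbers are assumed.
def simulate(recorded, years, true_rate=0.1, patrol_hours=1000):
    """Return recorded incident counts after `years` of record-driven patrols.

    Every district has the SAME true incident rate per patrol hour.
    Patrol hours are allocated in proportion to past recorded incidents,
    and an incident only enters the records if a patrol is there to see it.
    """
    recorded = dict(recorded)  # work on a copy, leave the input untouched
    for _ in range(years):
        total = sum(recorded.values())
        updated = {}
        for district, count in recorded.items():
            hours = patrol_hours * count / total      # attention follows the data
            updated[district] = count + true_rate * hours  # data follow attention
        recorded = updated
    return recorded


# Hypothetical starting records: the north was simply watched more in the past.
start = {"north": 60.0, "south": 40.0}
after = simulate(start, years=10)
north_share = after["north"] / sum(after.values())
print(f"north share of recorded incidents: {north_share:.2f}")
```

Both districts are equally risky by construction, yet after ten simulated years the records still attribute about 60 per cent of incidents to the north. The data measure where the system looked, not what actually happened, so the original skew never corrects itself.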

History has already encountered similar attempts to turn criminality into a calculable quantity.

At the end of the nineteenth century, the Italian criminologist Cesare Lombroso tried to determine criminal inclination from skull structure and physiognomy. His theory was later judged pseudoscientific, yet the very idea of a "predictable criminal" turned out to be remarkably persistent. Only now, instead of compass and ruler, neural networks do the work. That is precisely why the principal risk of the twenty-first century is not associated with a revolt of the machines, as science fiction so often likes to portray. Far more dangerous is something else. A world in which the human being gradually becomes accustomed to the algorithm knowing more about him than he himself does.

The Human as Raw Material

The old industrial capitalism extracted coal, oil, metal and timber.

The new digital capitalism extracts behaviour. Every movement of a person turns into raw material for analytics. The smartphone reveals more about its owner than neighbours, colleagues and relatives combined have ever known. And most people do not even imagine the scale of this process. When a person wakes up in the morning and picks up his phone, the system already begins to register dozens of parameters. Time of screen activation. Speed of finger movement. Geolocation. Ambient light level in the room. Frequency of app use. Length of pauses between actions. At first glance, all this looks like technical noise. But it is from precisely such noise that the digital model of an individual is built.

According to a Mozilla Foundation study, a significant share of popular mobile apps collects far more information than is necessary for their direct function. The phone tracks a person's speed of movement, sleep regime, level of activity, social circle and habitual routes. Even with Bluetooth turned off, it is possible to build a map of movement inside buildings. Particularly valuable are not the conversations and messages themselves, but the metadata. Who called. When. From where. How long the call lasted. How often the contact recurs. At what time of day the person is most active.
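How much such records reveal can be seen in a few lines. The following sketch uses an invented call log that contains no conversation content at all; the contact labels, the timestamps and the `profile` helper are hypothetical and stand in for no real operator's data or API.

```python
from collections import Counter
from datetime import datetime

# Invented call log: (contact, start time, duration in seconds). No content.
CALLS = [
    ("clinic", "2025-02-03T08:15:00", 240),
    ("contact_a", "2025-02-03T23:40:00", 1800),
    ("contact_a", "2025-02-04T23:55:00", 2100),
    ("bank", "2025-02-05T12:05:00", 300),
    ("contact_a", "2025-02-06T23:30:00", 1500),
]


def profile(calls):
    """Summarise who, when and how long, using metadata only."""
    contact_counts = Counter(contact for contact, _, _ in calls)
    hour_counts = Counter(
        datetime.fromisoformat(start).hour for _, start, _ in calls
    )
    talk_seconds = Counter()
    for contact, _, seconds in calls:
        talk_seconds[contact] += seconds
    return {
        "most_frequent_contact": contact_counts.most_common(1)[0][0],
        "most_active_hour": hour_counts.most_common(1)[0][0],
        "longest_total_talk": talk_seconds.most_common(1)[0][0],
    }


summary = profile(CALLS)
print(summary)
```

Five rows are enough to suggest a close relationship, a habit of late-night calls and a recent contact with a clinic and a bank, without a single word of any conversation being known.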

The former director of the NSA, Michael Hayden, once uttered a phrase that has since become almost an aphorism: "We kill people based on metadata."

What matters in this phrase is not the military side. What matters is the admission of scale. Metadata have ceased to be technical refuse. They have become the digital skeleton of the personality.

Economic historians like to say that oil was the principal resource of the twentieth century. But oil at least had to be extracted from the earth. Behavioural data are extracted by people from themselves. And the paradox of our time is that most people do this voluntarily. They publish their travel routes. They surrender biometric data. They document their own habits. They tell algorithms about their tastes, fears, political views and emotional reactions.

Why the Human Operator Is Still Required

Never before has civilisation produced so detailed an archive of itself. At the start of the twentieth century, intelligence services could spend years building a psychological portrait of a single individual. Today an advertising platform can build such a profile in a few weeks. Without interrogations, without surveillance, without recruiting agents. It is enough that the person himself carries a surveillance device in his pocket. That is precisely why the personal security of the future is less and less linked to the classical understanding of protection. The problem is no longer only whether your data can be stolen. The problem is that your very identity is gradually becoming an object of continuous computation.

Deepfake and the End of Trust

In early 2024 a financial officer of an international company in Hong Kong transferred about twenty-five million dollars to fraudsters. The cause was a video call. On the screen were his colleagues and the company's leadership. Everything looked entirely authentic. The voices matched. The expressions matched. The intonations matched. The people on the screen were discussing the details of work processes, internal documents and the company's financial operations. The only problem was that all the participants in the conference, apart from the officer himself, had been generated by artificial intelligence.

The event was symbolic. Humanity has entered an age in which one can no longer unconditionally trust one's own eyes. For centuries civilisation rested on a fairly simple principle: to see is to be sure. The photograph was considered evidence. The video was perceived as confirmation of fact. A person's voice was a part of his identity. Now all of this is beginning to collapse.

Deepfake is changing the very nature of evidence. Once, a swindler had to forge signatures and documents. Then came doctored photographs. Today a few minutes of video and open-source software are enough to create a convincing digital copy of a person. Particularly dangerous is the fact that synthetic-reality technologies are rapidly becoming cheaper.

In the past, a serious operation to discredit someone required the resources of a special service or a large media organisation. Today the same task can be accomplished by a person with a laptop and access to a neural network.

But the problem of deepfake is far broader than ordinary fraud. It is a matter of the gradual destruction of the very mechanism of public trust. If a photograph can be doctored, a video synthesised, and a voice copied, society begins to lose the basis for confirming reality.

Historians of technology like to compare the digital revolution to the invention of the printing press. But perhaps a different comparison is more accurate. Deepfake is the moment when civilisation loses its monopoly on reality. In the years to come humanity will, in all likelihood, encounter a new form of information war. Not a war for territory. Not a war for resources. A war for authenticity.

Imagine the situation: a video appears online in which a politician declares a state of emergency. Or a military commander issues an order. Or a major entrepreneur confesses to financial crimes. Even if the recording turns out to be fake, the very fact of its appearance is enough to provoke consequences — panic, a financial crash, reputational ruin. This is one of the most dangerous features of the age of artificial intelligence.

Once, lying took time. Now a few hours are enough for synthetic reality to begin influencing the real decisions of millions of people. And here another paradox arises. The more sophisticated the technologies of forgery become, the less people trust anything at all. An age is beginning in which a human being is forced to doubt even what is obvious. And a society incapable of telling truth from a high-quality simulation becomes extremely vulnerable.

That is why the personal security of the future will increasingly be tied not only to the protection of data, but also to the protection of the identity itself as a demonstrable reality.

Sources: Stanford University, psychometric analysis of digital behavior; Carnegie Mellon University, behavioral fraud prediction systems; Transport for London analytics reports; Brennan Center for Justice; Chicago Crime Lab; Mozilla Foundation, Privacy Not Included reports; Michael Hayden interview, 2014; Hong Kong Police Force reports on deepfake fraud cases.